Dive into the controversial rise of Cluely AI. How founders Roy Lee and Neel Shanmugam weaponized virality to turn a cheating hack into a $120M startup.

Author: Jimmey Barnwal | December 10, 2025 | 5 min. read

In one version of the future, disruption looks like elegant APIs, quiet offices and careful whitepapers. In another, it looks like a dorm-room hack that doubled as a dare: build something that lets you win when the rules are stacked against you.

Cluely — the AI company cofounded by Roy Lee and Neel Shanmugam — belongs to the latter. In less than a year it went from clandestine overlay tool to a viral phenomenon, a $120 million valuation, and a cultural Rorschach test: brilliant product-market timing or a rampaging ethical catastrophe.

This is the story of how two expelled Ivy League students turned controversy into capital, and the heavy questions that followed.

The Cluely AI Saga: How a Cheating Tool Became a $120M AI Giant

It began, like many modern origin myths, with one frustrated student and one idea that didn’t ask permission.

Roy Lee—then a Columbia undergrad—grew tired of the sweat-and-nerve gauntlet that is modern technical interviewing.

In early 2025 he sketched a solution: an invisible, real-time overlay that listened, analyzed and surfaced answers during video interviews and coding sessions.

An early prototype, nicknamed InterviewCoder, fed solutions into the user’s environment in a way that aimed to be undetectable.

Word spread fast. For many anxious students the overlay was a cheat code; for their universities it was academic subversion.

Columbia suspended and then expelled Lee. Harvard rescinded an offer. Rather than disappear, Lee leaned into the branding: “If they call it cheating,” he later said in interviews, “why not own it?”

Enter Neel Shanmugam. Also recently out of the Ivy system after his own clashes with academic integrity rules, he paired Lee’s provocation with operational discipline. Together they moved the project to San Francisco, incorporated, and rechristened the product under a single, defiant name: Cluely.

Bootstrapping the Beast: Converting a Dorm Hack into an MVP

The first phase was simple and lean: open-source models, cloud credits, a translucent overlay that read screens and produced prompts.

The goal wasn’t to build perfect AI; it was to build something that worked—and that could be shared secretly among peers.

They refined the overlay to work across common interview environments, then broadened the concept: why stop at interviews? Sales calls, meetings, even dates—what if the overlay could “cheat” across life’s high-pressure moments?

Lee and Shanmugam launched an MVP in spring 2025 and, critically, began seeding underground buzz.

The tool’s early adopters were students and young professionals willing to trade authenticity for an edge.

The result: explosive grassroots adoption long before the company had publicly launched a product.

Engineering outrage: virality as a deliberate strategy

Cluely didn’t accidentally go viral. It weaponized virality.

Lee—adept at provocation—ran what one analyst later called a “rage-bait” marketing machine. Raw, edgy TikToks, staged cheating stunts and a relentless content army of young creators flooded the attention economy. The team spent heavily: six-figure budgets on single videos, a reported $1.5 million on an ostentatious product-launch rave, and sizable retainers for influencers. Lee’s maxim—“If half your audience doesn’t hate you, it’s not viral enough”—was more than bravado; it was strategy.

That strategy worked. A waitlist ballooned to 150,000 people. Billions of views followed. But virality had a cost: it turned a functional tool into a social lightning rod, inviting intense scrutiny from universities, employers and regulators.

The ethics storm: is innovation an excuse for deception?

Cluely’s central claim, that “cheating” could be reframed as leverage, placed it squarely in a moral minefield.

Supporters argued Cluely was an augmentation tool, akin to calculators or spellcheck, leveling the playing field for those disadvantaged by traditional gatekeeping. Critics saw something bleaker: an app that devalued effort, enabled misrepresentation, and eroded trust in credentialing systems. If someone aced a technical interview using an overlay, would they actually perform on the job? The stakes aren’t hypothetical; misplaced confidence in skills can have cascading harms in software, healthcare, finance and other fields where competence matters.

Ethicists and mentors asked hard questions. Was intentionally enabling deception a legitimate path to product-market fit? Or did Cluely cross an irreversible line—sacrificing civil norms for growth metrics?

Privacy, GDPR and the reality of detection

Beyond philosophy there were hard facts. Cluely recorded and processed data during live calls and interviews—often without explicit consent from all parties involved—raising alarm bells about privacy laws like GDPR and various U.S. state statutes. Users reported being penalized when their use was detected during screen-shares; engineers outside the company built detectors and countermeasures that exposed the product’s limitations.

Company promises of “maximal undetectability” were contradicted in practice. That gap between marketing and reality fed allegations of false advertising and consumer harm. A further flashpoint: a bug-bounty program that reportedly pressured researchers to remove public disclosures as a condition of payment. To the security community, that looked less like responsible disclosure and more like muzzling critique—an ethical and PR catastrophe.

Rage-bait marketing: attention, money, and reputational consequences

Cluely’s content-first approach turned outrage into fuel. The company’s social strategy boosted its top-line metrics but also produced a polarizing brand.

For every applauding underdog there was a vociferous critic calling the brand “unethical slop.”

Investors noticed the numbers: billions of views, rapid signups, and tens of thousands of paid subscribers.

But other stakeholders—enterprise buyers, universities, regulators—looked at the brand and hesitated.

Could a company that celebrated deception sell trust to enterprises and governments?

The tension between attention and trust is the story of Cluely’s pivot attempt.

The pivot paradox: Switching from “Cheat” to Enterprise Productivity

By mid-2025,

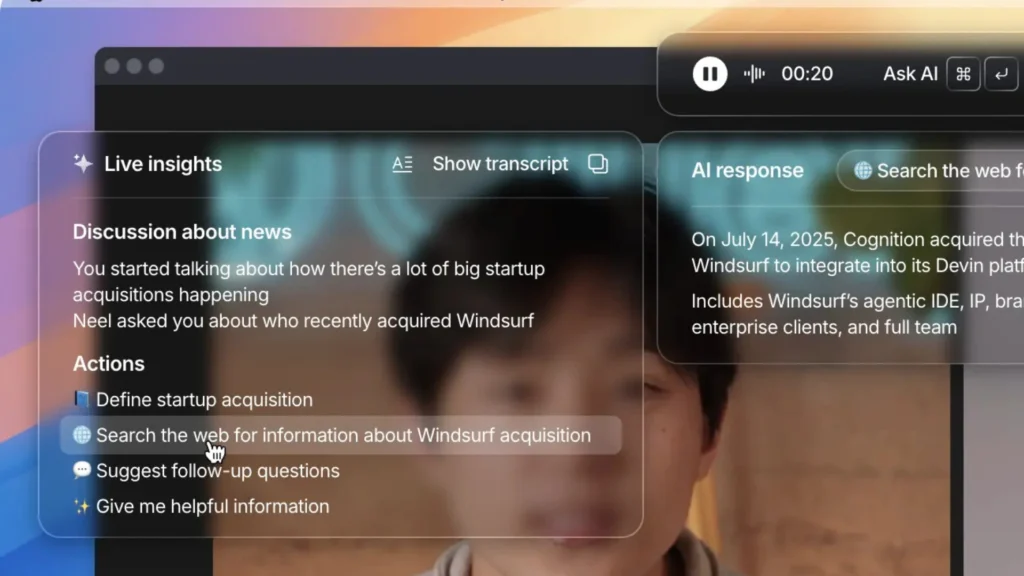

Cluely publicly shifted gears: the overlay was reframed as an enterprise-grade AI assistant—real-time note-taking, context-aware meeting aids, transcript-based knowledge recall.

The company claimed the pivot produced $7M in ARR and even argued it had reached profitability.

Skeptics were unconvinced.

Was this a genuine evolution of product utility—or a PR maneuver to sanitize a scandal-ridden brand and chase sustainable revenue?

Some observers speculated Cluely had engineered controversy to build a funnel: create outrage, convert attention to signups, secure funding, then rebrand.

If true, that would amount to deliberately exploiting virality for returns on capital while sidestepping the moral consequences.

Financials and Funding: The Andreessen Horowitz Effect

Cluely’s financial journey was lightning fast.

A $5.3M pre-seed followed by a $15M Series A led by Andreessen Horowitz catapulted the company to a $120M valuation in June 2025.

With capital in hand, the founders spent aggressively—talent packages with eye-popping salaries, office upgrades, merch and marketing stunts that fed the content machine.

These expenditures powered growth but strained credibility. Analysts and independent researchers questioned the sustainability of lavish marketing pushes when product reliability and legal exposure were unresolved.

Were investors buying into durable tech or the narrative of contrarian founders who could manufacture headlines?

Cluely in Late 2025: Current Status and Future Outlook

Numbers tell two stories: the headline and the footnote.

Cluely reported rapid adoption—tens of thousands of subscribers and claims of millions in ARR. A mid-2025 enterprise push reportedly expanded revenue dramatically in a short span. Yet transparency was limited.

Public DAU numbers were scarce; founders stopped sharing granular metrics after an earlier spree of flashes and teasers. Independent estimates suggested ARR growth but also flagged operating expenses that potentially outpaced MRR—calling into question the company’s profitability claims.
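The gap between a headline ARR figure and actual profitability comes down to simple arithmetic. As a minimal sketch, the check below takes the $7M ARR the company claimed and compares the implied monthly revenue against a range of monthly operating expenses. The expense figures are purely hypothetical placeholders chosen for illustration; they are not drawn from any Cluely disclosure or independent estimate.

```python
def monthly_recurring_revenue(arr: float) -> float:
    """Convert annual recurring revenue (ARR) to monthly recurring revenue (MRR)."""
    return arr / 12


def monthly_net(arr: float, monthly_opex: float) -> float:
    """Monthly surplus (positive) or burn (negative) given ARR and monthly opex."""
    return monthly_recurring_revenue(arr) - monthly_opex


# ARR figure the company claimed after its enterprise pivot (from the article).
CLAIMED_ARR = 7_000_000

# HYPOTHETICAL monthly operating expenses, for illustration only.
HYPOTHETICAL_MONTHLY_OPEX = [400_000, 600_000, 800_000]

if __name__ == "__main__":
    mrr = monthly_recurring_revenue(CLAIMED_ARR)
    print(f"Implied MRR: ${mrr:,.0f}")
    for opex in HYPOTHETICAL_MONTHLY_OPEX:
        net = monthly_net(CLAIMED_ARR, opex)
        status = "profitable" if net > 0 else "burning cash"
        print(f"Opex ${opex:,.0f}/month -> net ${net:,.0f}/month ({status})")
```

At $7M ARR the implied MRR is roughly $583,000, so any monthly spend above that level means the company is burning cash regardless of how fast the headline number grows.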

Product bugs, platform incompatibilities (e.g., Linux access issues), and the churn of users disappointed by overhyped promises hinted that virality had not fully translated into sticky product-market fit.

Where they stood as of December 9, 2025: pivot, perimeter or peril?

By late 2025 the company had relocated from San Francisco to New York, a move framed as strategic and cloaked in whispers of regulatory pressure.

The public face, Roy Lee, continued to embrace controversy in interviews and on conference stages, while Neel Shanmugam focused on R&D and product hardening.

Cluely’s brand rhetoric softened in places: overt “cheat-on-everything” language gave way to enterprise messaging about productivity and context-aware assistance.

Yet the core questions remained. Can a company born from a callous embrace of cheating ever fully convince enterprise buyers and regulators of its ethical maturity?

Will legal complaints from universities, privacy regulators, or employers materialize in ways that curtail growth?

Or will the company convert its explosive early growth into a durable, ethical product that rides the same AI wave as more conventional assistants?

Key Lessons from the Cluely Experiment

Cluely’s arc is a compressed case study in modern startup dynamics:

- Virality is a double-edged sword. It accelerates growth but amplifies scrutiny, especially if your product flirts with breaking social norms.

- Narratives can be assets—and liabilities. Positioning yourself as an anti-system hero can win attention but can also close doors to institutional customers and invite legal risk.

- Product reliability matters more than hype. Bold claims about undetectability create expectations that, if unmet, cause reputational and legal damage.

- Ethics and engineering are inseparable. In the age of real-time AI, decisions about what a product enables are as consequential as how well it runs.

- Investors underwrite story as much as software. Venture dollars can escalate a risky concept into a high-stakes experiment with public consequences.

The final act? (For now)

Cluely’s story—so far—doesn’t end in a dramatic collapse or a classic “clean pivot” redemption.

It ends in tension: a company sitting on a high valuation, with real revenue and real engineering talent, yet haunted by origins that many find morally dissonant.

The trajectory ahead is uncertain. Will regulators hound them into conservative stewardship? Will enterprise adoption outpace outrage? Or will Cluely double down, courting controversy as a lasting growth lever?

One thing is clear: Cluely forced a conversation that was simmering under the surface of modern work and learning.

In an era where AI can readily augment, automate, or obscure human action, we are being asked to decide not only what machines can do, but what we want machines to enable us to be.

Cluely chose provocation; the rest of us must decide whether that provocation is a necessary abrasive for change or a dangerous erosion of trust.

Epilogue: a cautionary parable for the attention economy

Cluely’s rise is emblematic of a larger truth about 2020s tech: attention is currency, and some founders will architect products to buy it. That strategy becomes hubris when attention substitutes for accountability.

The company may survive, pivot, or disintegrate—but its legacy is already written into startup lore: a cautionary tale about how virality can build empires overnight and ethical debt even faster.

Whether Cluely ultimately becomes a case study in smart rebranding and product maturation or a warning label in the annals of Silicon Valley missteps depends not just on engineers and investors, but on regulators, employers, educators—and, crucially, on the collective decision about what counts as achievement in an AI-augmented world.
